CitySearch AI: LLM & RAG-Powered Decision Intelligence System


"We turned complex city data locked in databases into a natural-language, decision-ready AI system anyone can query."

Project Overview

CitySearch AI is a hybrid AI-powered decision intelligence system that allows users to explore and compare U.S. cities using natural language questions, rather than rigid filters or SQL-style queries.

The system is designed to bridge the gap between structured city data and unstructured lifestyle context, enabling accurate, explainable, and fast answers to real-world questions such as:

● Which cities are best for families?

● What is life actually like in Austin?

● Which cities are similar to Seattle but more affordable?

This project focuses on production-grade architecture, prioritizing performance, cost efficiency, and reliability over generic LLM usage.

Problem Statement

City-level data already exists in databases, but it is often:

● Locked behind rigid filters and dashboards

● Limited to numeric aggregates

● Inaccessible to non-technical users

At the same time, relying purely on general-purpose LLMs to answer city-related questions introduces:

● Hallucinations

● Lack of grounding

● Inconsistent answers

People don’t think in filters or SQL. They ask real questions.

Traditional analytics tools and naïve LLM integrations fail to handle this reliably.

Solution: RAG-Based Hybrid Query & Retrieval Strategy

CitySearch AI is built as a hybrid AI system that combines:

● Deterministic SQL queries for structured, factual data

● Retrieval-Augmented Generation (RAG) for contextual and lifestyle insights

● Intelligent query routing to select the most reliable execution path per request

The result is an AI system that delivers accurate, fast, and grounded answers, without overusing LLMs.

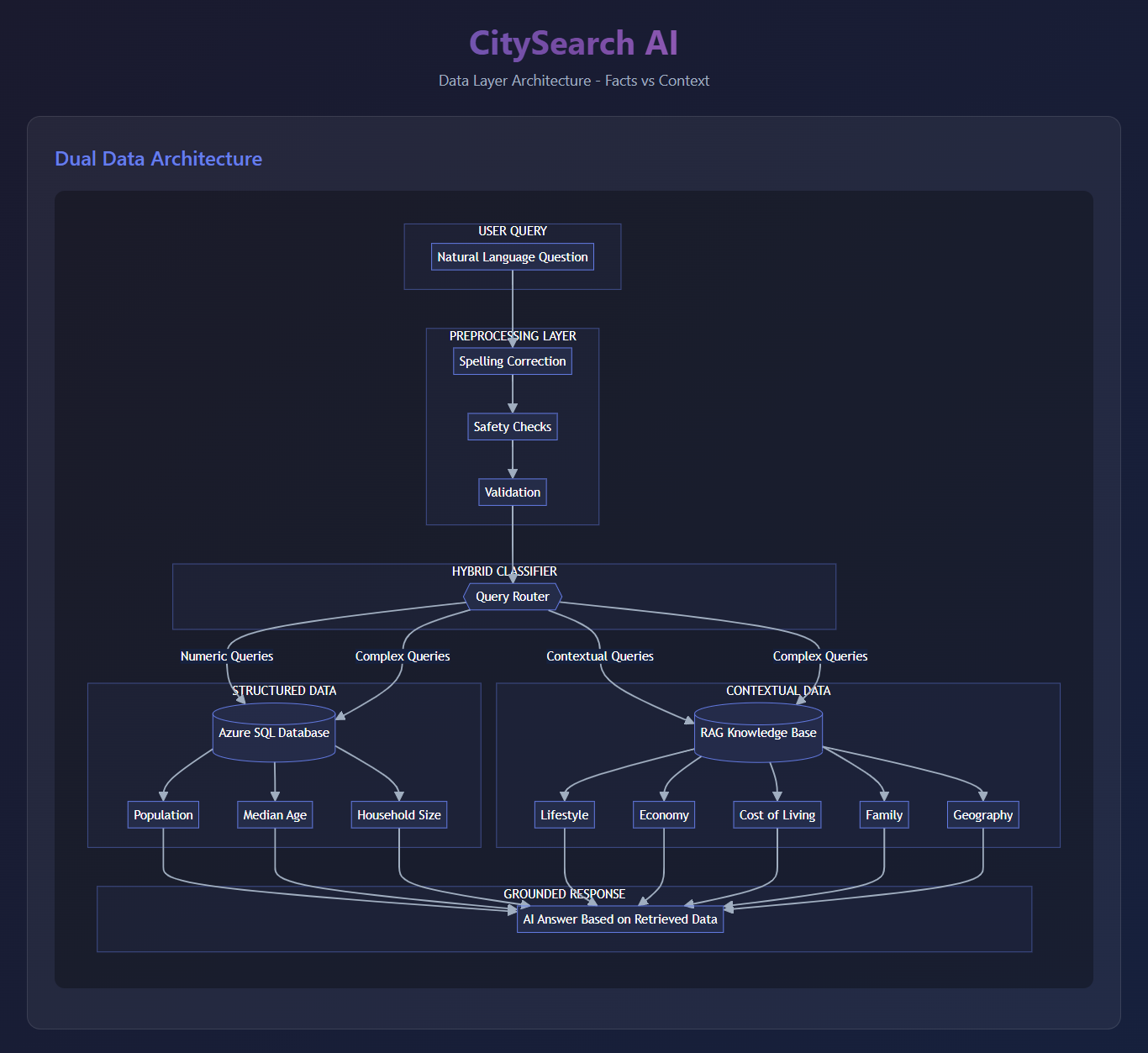

System Architecture (High-Level)

Separation of Facts and Context

The system explicitly separates what can be computed from what must be described.

Structured Data Layer (Azure SQL)

● Population

● Median age

● Household size

● Numeric aggregates and filters

This layer provides fast, deterministic responses for all factual and aggregate queries.
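As a rough illustration of the kind of deterministic filter and aggregate queries this layer serves, here is a minimal sketch. It uses an in-memory SQLite database as a stand-in for Azure SQL; the `cities` table, its column names, and the rows are hypothetical placeholders, not the project's actual schema or data.

```python
import sqlite3

# In-memory SQLite stands in for Azure SQL; schema and rows are hypothetical.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE cities (name TEXT, state TEXT, population INTEGER, "
    "median_age REAL, household_size REAL)"
)
conn.executemany(
    "INSERT INTO cities VALUES (?, ?, ?, ?, ?)",
    [
        ("Alpha", "TX", 900_000, 33.0, 2.4),  # placeholder rows, not real data
        ("Beta", "WA", 700_000, 35.5, 2.1),
        ("Gamma", "TX", 150_000, 38.2, 2.8),
    ],
)


def cities_over_population(min_population: int) -> list[tuple[str, int]]:
    """Filter query; parameterized so user input never reaches the SQL string."""
    rows = conn.execute(
        "SELECT name, population FROM cities WHERE population > ? "
        "ORDER BY population DESC",
        (min_population,),
    )
    return rows.fetchall()


def average_median_age(state: str) -> float:
    """Aggregate query: average median age across one state's cities."""
    (value,) = conn.execute(
        "SELECT AVG(median_age) FROM cities WHERE state = ?", (state,)
    ).fetchone()
    return value
```

Because the same input always produces the same rows, results like these are also straightforward to cache.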

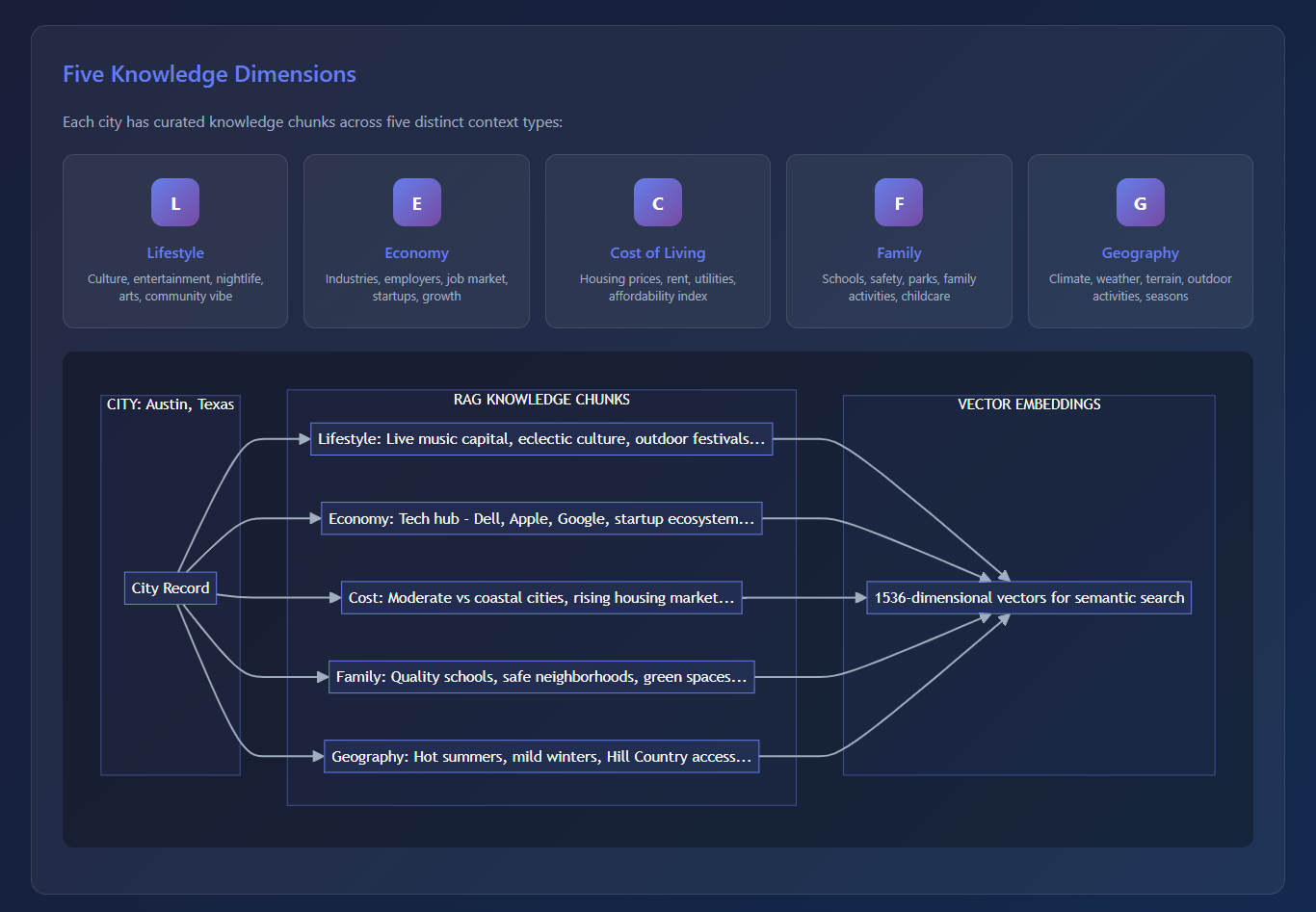

Contextual Knowledge Layer (RAG)

Cities are not just numbers. Concepts such as lifestyle, economy, family-friendliness, cost of living, and geography do not exist in tables. To handle this, CitySearch AI uses a RAG layer in which each city is represented by curated knowledge chunks across five dimensions:

● Lifestyle

● Economy

● Cost of living

● Family

● Geography

All LLM responses are grounded strictly in retrieved context – not model memory.
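One way to picture the chunk structure and the grounding step is the sketch below. The `KnowledgeChunk` class, the dimension identifiers, and the prompt wording are illustrative assumptions; the actual prompts and storage format are not described in this write-up.

```python
from dataclasses import dataclass

# The five dimensions named above, as hypothetical identifiers.
DIMENSIONS = ("lifestyle", "economy", "cost_of_living", "family", "geography")


@dataclass(frozen=True)
class KnowledgeChunk:
    city: str
    dimension: str
    text: str

    def __post_init__(self):
        # Reject chunks that fall outside the five known dimensions.
        if self.dimension not in DIMENSIONS:
            raise ValueError(f"unknown dimension: {self.dimension}")


def build_grounded_prompt(question: str, chunks: list[KnowledgeChunk]) -> str:
    """Assemble a prompt that tells the model to rely only on retrieved chunks."""
    context = "\n".join(
        f"[{i}] ({c.city} / {c.dimension}) {c.text}"
        for i, c in enumerate(chunks, start=1)
    )
    return (
        "Answer using ONLY the context below. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )
```

Numbering the chunks in the prompt makes it possible to ask the model to cite which chunk supports each statement.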

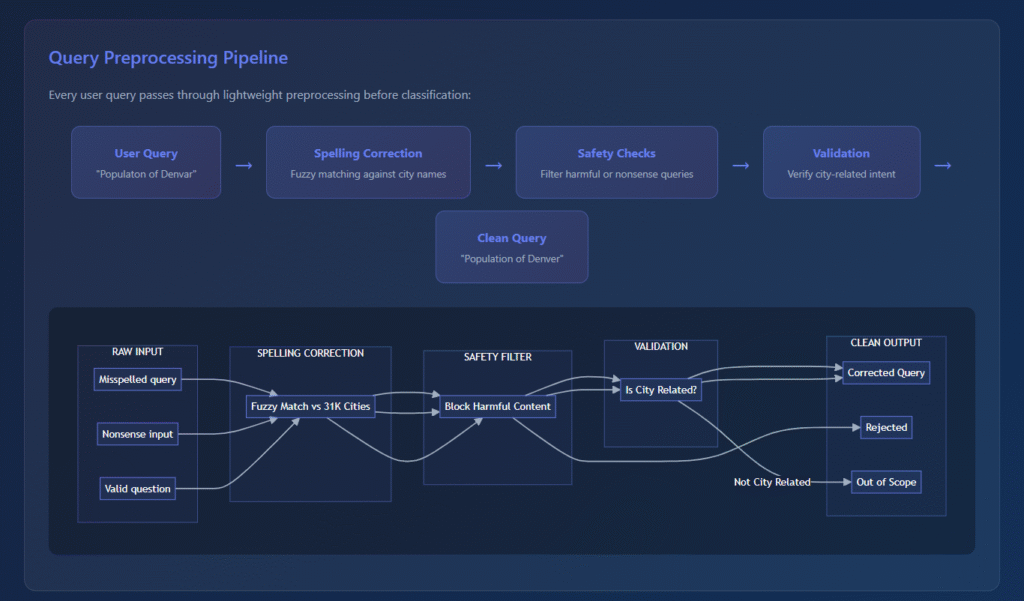

Intelligent Query Classification & Routing

Every user query first passes through a lightweight preprocessing layer:

● Spelling correction

● Validation

● Safety checks
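A minimal sketch of such a preprocessing step, using only the Python standard library, might look like this. The city list, the length limit, and the blocked pattern are assumptions chosen for illustration; a production safety check would be considerably more thorough.

```python
import difflib
import re

KNOWN_CITIES = ["Austin", "Seattle", "Denver", "Boston"]  # hypothetical subset
MAX_QUERY_LENGTH = 300  # assumed limit
# Crude, assumed safety filter for obvious SQL-injection fragments.
BLOCKED_PATTERN = re.compile(r"(;|--|\bdrop\s+table\b)", re.IGNORECASE)


def correct_city_names(query: str) -> str:
    """Replace near-miss spellings of known city names with the canonical name."""

    def fix(match: re.Match) -> str:
        word = match.group(0)
        candidates = difflib.get_close_matches(
            word.title(), KNOWN_CITIES, n=1, cutoff=0.8
        )
        return candidates[0] if candidates else word

    # Only words of four or more letters are considered for correction.
    return re.sub(r"[A-Za-z]{4,}", fix, query)


def preprocess(query: str) -> str:
    """Normalize whitespace, validate, run the safety check, then fix spelling."""
    cleaned = " ".join(query.split())
    if not cleaned:
        raise ValueError("empty query")
    if len(cleaned) > MAX_QUERY_LENGTH:
        raise ValueError("query too long")
    if BLOCKED_PATTERN.search(cleaned):
        raise ValueError("query rejected by safety check")
    return correct_city_names(cleaned)
```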

It is then handled by a four-tier hybrid classifier:

● Cache Lookup: instant responses for repeated queries

● Rule-Based Pattern Matching: handles ~60–70% of common queries with zero LLM calls

● LLM-Based Intent Classification: for complex or ambiguous queries

● Fallback Router: ensures safe degradation in edge cases

This design allows the system to determine the cheapest, fastest, and most reliable execution path within milliseconds.
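The four tiers can be sketched as a single classifier that tries each path in order of cost. The rule patterns, intent labels, and the `llm_classify` callable below are hypothetical; the real rules and the LLM call are not shown in this write-up.

```python
import re
from typing import Callable, Optional

# Hypothetical rule set: (pattern, intent). Checked in order.
RULES = [
    (re.compile(r"\b(population|median age|household size)\b", re.I), "lookup"),
    (re.compile(r"\bsimilar to\b", re.I), "similarity"),
    (re.compile(r"\b(best|top|worst)\b", re.I), "ranking"),
]


class TieredClassifier:
    def __init__(self, llm_classify: Optional[Callable[[str], Optional[str]]] = None):
        self._cache: dict[str, str] = {}
        self._llm_classify = llm_classify  # stand-in for an LLM intent call

    def classify(self, query: str) -> tuple[str, str]:
        """Return (intent, tier), trying the cheapest tier first."""
        key = query.strip().lower()
        if key in self._cache:  # tier 1: cache lookup
            return self._cache[key], "cache"
        intent, tier = self._classify_uncached(key)
        if tier != "fallback":  # fallback results are not cached
            self._cache[key] = intent
        return intent, tier

    def _classify_uncached(self, key: str) -> tuple[str, str]:
        for pattern, intent in RULES:  # tier 2: rules, no LLM call
            if pattern.search(key):
                return intent, "rules"
        if self._llm_classify is not None:  # tier 3: LLM classification
            try:
                intent = self._llm_classify(key)
            except Exception:
                intent = None  # treat LLM failures as "no answer"
            if intent:
                return intent, "llm"
        return "general", "fallback"  # tier 4: safe default
```

In this sketch an LLM error or empty answer drops through to the fallback tier instead of surfacing to the user.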

Query Execution Engine

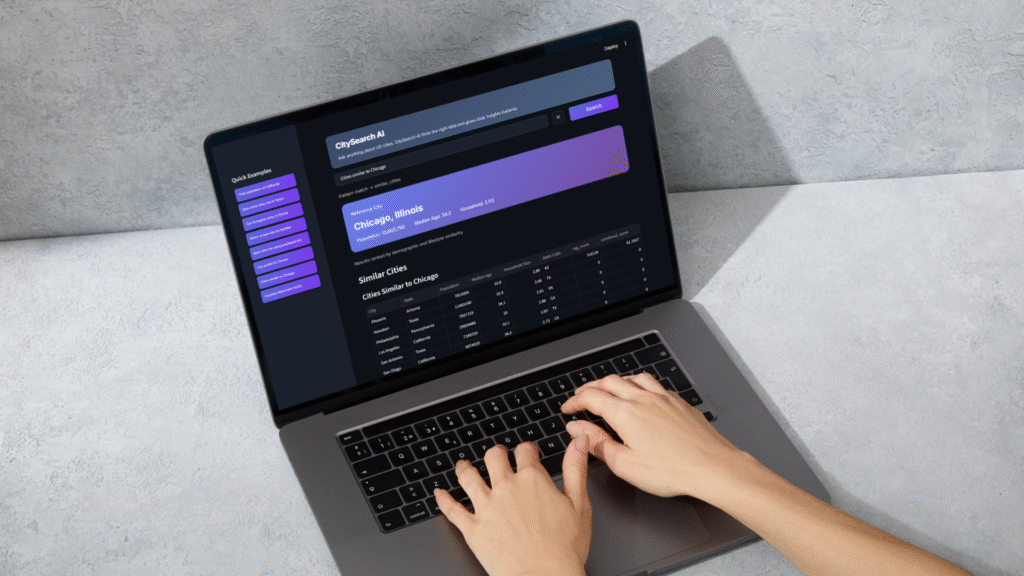

The router supports 15+ distinct query types, including lookups, aggregates, filters, comparisons, rankings, superlatives, and semantic similarity searches. Each query type is routed to the appropriate execution engine:

● SQL → numeric aggregates, filters, comparisons

● ML models → rankings such as “best cities for families”

● RAG semantic search → lifestyle, culture, and similarity queries

This avoids unnecessary LLM usage and significantly improves cost efficiency and reliability.
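The mapping above can be expressed as a simple dispatch table. In this sketch the query-type names, the stub handlers, and the choice of SQL as the default for unmapped types are all assumptions; only the SQL, ML, and RAG groupings come from the description above.

```python
from enum import Enum
from typing import Callable


class Engine(Enum):
    SQL = "sql"
    ML = "ml"
    RAG = "rag"


# Query types grouped as described above; the string keys are hypothetical.
ENGINE_BY_QUERY_TYPE = {
    "aggregate": Engine.SQL,
    "filter": Engine.SQL,
    "comparison": Engine.SQL,
    "ranking": Engine.ML,
    "lifestyle": Engine.RAG,
    "culture": Engine.RAG,
    "similarity": Engine.RAG,
}


def select_engine(query_type: str) -> Engine:
    # Assumption: unmapped types default to the deterministic SQL path.
    return ENGINE_BY_QUERY_TYPE.get(query_type, Engine.SQL)


# Stub handlers standing in for the real execution engines.
HANDLERS: dict[Engine, Callable[[str], str]] = {
    Engine.SQL: lambda q: f"[sql] {q}",
    Engine.ML: lambda q: f"[ml] {q}",
    Engine.RAG: lambda q: f"[rag] {q}",
}


def execute(query_type: str, query: str) -> str:
    """Route a classified query to the handler for its engine."""
    return HANDLERS[select_engine(query_type)](query)
```

Keeping the routing in a plain table means a new query type can be added without touching the handlers.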

Performance Optimization

Instead of loading the full embedding index (~30,000 chunks) for every query, CitySearch AI:

● Dynamically loads only the relevant city’s knowledge chunks

● Typically loads 5–10 embeddings per request

This optimization:

● Reduced response latency from >20 seconds to <3 seconds

● Achieved approximately 98% performance improvement
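The idea of per-city loading can be sketched as follows. The in-memory `CHUNK_STORE`, the three-dimensional vectors, and the chunk texts are placeholders; the project's actual vector store and embedding model are not specified here.

```python
import math

# Hypothetical store keyed by city: each entry is (chunk_text, embedding).
CHUNK_STORE = {
    "austin": [
        ("Placeholder lifestyle chunk", [0.9, 0.1, 0.0]),
        ("Placeholder economy chunk", [0.1, 0.9, 0.0]),
    ],
    "seattle": [
        ("Placeholder geography chunk", [0.0, 0.2, 0.9]),
    ],
}


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve_for_city(city: str, query_embedding: list[float], top_k: int = 3) -> list[str]:
    """Score only the requested city's chunks instead of the full index."""
    chunks = CHUNK_STORE.get(city.lower(), [])
    ranked = sorted(chunks, key=lambda c: cosine(query_embedding, c[1]), reverse=True)
    return [text for text, _ in ranked[:top_k]]
```

For scale, scoring 10 chunks out of roughly 30,000 touches about 0.03% of the index per request.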

Reliability & Production Considerations

● Strict grounding prompts ensure the LLM uses only retrieved data

● Graceful degradation falls back to database-driven insights if RAG context is missing

● Multi-level caching of SQL results, query embeddings, and city-specific RAG chunks

Repeated queries return near-instantly, improving user experience and system stability.
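A minimal sketch of two of these ideas, embedding caching and graceful degradation, is shown below. The `embed_query` body is a stand-in for a real embedding call, and the function names, dictionary shapes, and `source` labels are hypothetical.

```python
from functools import lru_cache

CALLS = {"embed": 0}  # counter used only to show the cache taking effect


@lru_cache(maxsize=1024)
def embed_query(text: str) -> tuple[float, ...]:
    """Stand-in for an embedding call; repeated inputs are served from cache."""
    CALLS["embed"] += 1
    return (float(len(text)), float(text.count(" ")))


def answer_city_question(
    city: str,
    rag_chunks: dict[str, list[str]],
    sql_facts: dict[str, dict],
) -> dict:
    """Prefer RAG context; fall back to database facts if none is available."""
    chunks = rag_chunks.get(city)
    if chunks:
        return {"source": "rag", "context": chunks}
    facts = sql_facts.get(city)
    if facts:
        return {
            "source": "sql_fallback",
            "context": [f"{key}: {value}" for key, value in facts.items()],
        }
    return {"source": "none", "context": []}
```

Returning the `source` alongside the context lets the interface indicate when an answer came from the database fallback.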

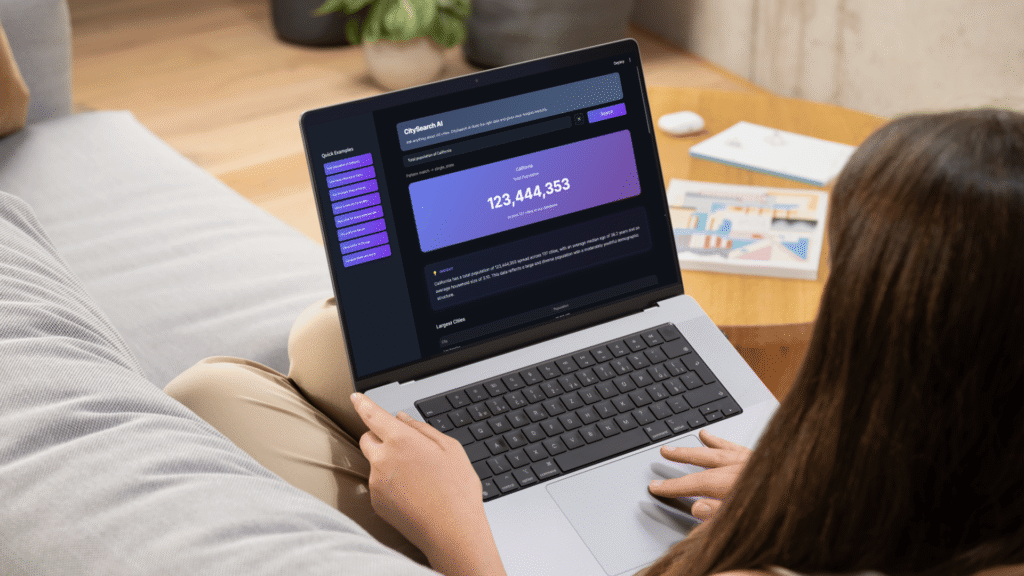

Output & User Experience

Results are delivered as:

● Visual cards

● Tables

● Ranked comparisons

rather than as raw text, which makes insights accessible to non-technical users.

This positions CitySearch AI as a decision-support system, not a regular chatbot.
